Large language models like the one powering ChatGPT can generate thousands of words in a single minute and quickly make sense of long inputs. Unlike humans, the chatbot doesn’t process text as whole sentences or words. Instead, it breaks text into tokens, which it uses to read and write human languages such as English and Spanish. This article explains how ChatGPT tokens work, why they are necessary, and how they affect the chat experience.

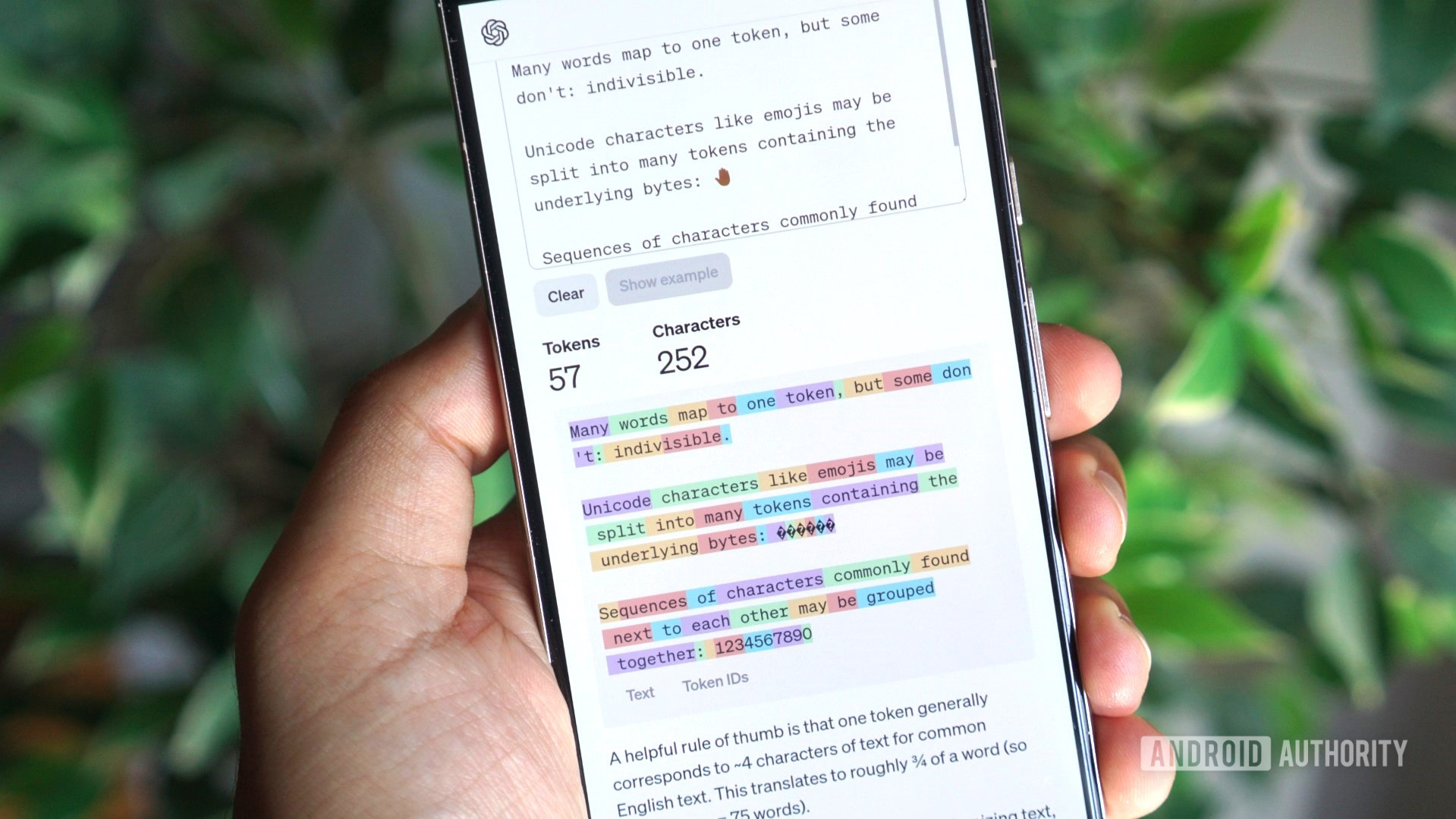

Tokens are the building blocks of any ChatGPT text response. The model’s tokenizer looks for frequently occurring combinations of letters and groups them together to form a token. For example, the word “air” forms a single token because it appears so often in everyday language. A single English word typically takes up between 1 and 3 tokens. Tokens also determine ChatGPT’s character limit and the language model’s “context window,” which is the maximum number of tokens the model can hold in memory at once.
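To make the idea of grouping letters into tokens concrete, here is a minimal sketch of a greedy longest-match tokenizer. It is only a toy: the vocabulary `TOY_VOCAB` and the function `toy_tokenize` are hypothetical names invented for this illustration, and ChatGPT's real tokenizer learns a far larger vocabulary from data using byte pair encoding rather than a hand-written list.

```python
# Hypothetical toy vocabulary for illustration only; a real tokenizer
# learns tens of thousands of entries from large amounts of text.
TOY_VOCAB = {"air", "port", "plane", "un", "break", "able", " "}


def toy_tokenize(text, vocab=TOY_VOCAB):
    """Split text greedily into the longest known pieces.

    Characters that match no vocabulary entry become one-character tokens.
    """
    max_len = max(len(entry) for entry in vocab)
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate first, falling back to a single character.
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if size == 1 or piece in vocab:
                tokens.append(piece)
                i += size
                break
    return tokens


print(toy_tokenize("air"))          # one token
print(toy_tokenize("airport"))      # two tokens
print(toy_tokenize("unbreakable"))  # three tokens
```

With this toy vocabulary, a familiar word such as “air” stays whole, while longer or rarer words are split into several pieces, which is why a single word can cost more than one token.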

The number of tokens a piece of text uses depends on factors such as word length, punctuation, numbers, spaces, and the language it is written in. The token limit in ChatGPT varies depending on the model used and how you interact with it. The cost per token also varies by model, with newer models like GPT-4 Turbo offering higher-quality responses and being more computationally efficient.

For developers and experimental users, the OpenAI Playground allows direct interaction with the underlying language model, where each message sent and received is billed according to the number of tokens it contains. OpenAI suggests that 1,000 tokens roughly correspond to 750 words of text.
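The 750-words-per-1,000-tokens rule of thumb can be turned into a quick back-of-the-envelope estimate. The sketch below assumes plain English text split on whitespace; the function names and the `price_per_1000_tokens` parameter are hypothetical, and the price used in the example is a placeholder rather than a real OpenAI rate. An actual tokenizer will give different counts, especially for code, numbers, or non-English text.

```python
WORDS_PER_1000_TOKENS = 750  # rule of thumb quoted above


def estimate_tokens(text):
    """Estimate the token count of text from its whitespace-separated word count."""
    words = len(text.split())
    # Integer ceiling division, so partial tokens are rounded up.
    return (words * 1000 + WORDS_PER_1000_TOKENS - 1) // WORDS_PER_1000_TOKENS


def estimate_cost(tokens, price_per_1000_tokens):
    """Estimate cost given a price per 1,000 tokens (the price is supplied by the caller)."""
    return tokens / 1000 * price_per_1000_tokens


sample = "word " * 750
tokens = estimate_tokens(sample)
# 0.01 is a placeholder price chosen for illustration, not an actual rate.
print(tokens, estimate_cost(tokens, price_per_1000_tokens=0.01))
```

Because both the ratio and the price are approximations, treat the result as a rough budget figure and check the current pricing for the model you plan to use.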

ChatGPT also limits the number of messages you can send per hour, known as the rate limit, and the cost of the ChatGPT API varies depending on the language model chosen.